Biological Information and Intelligent Design

Dennis Venema examines the claim often made by critics of evolution that information can't be generated through genetic processes.

Earlier this year I took part in a pair of lectures at my university—one where I presented on evolution and was critiqued by a colleague who is a supporter of Intelligent Design (ID) and one where my colleague presented on ID and I offered a critique. After both presentations, the audience was invited to ask questions of either of us. One key topic of discussion at both events was that of biological information: what it is, how it works, and whether it is evidence against evolution and for design.

After one of the talks, I had an extended back-and-forth with a member of the audience who held to an ID perspective. As we conversed about biological information, there came a point where I realized he was working with a very literal conception of “information” as it pertained to living things: he thought evolution was wrong and ID was correct because living things contained written codes that could not be explained by natural processes. At that point I asked him if he understood that what we were talking about was in fact organic chemistry (complex and intricate organic chemistry, to be sure, but organic chemistry nonetheless). He was taken aback; he had not thought about biological information in that way before.

Part of the problem, of course, is the way biologists themselves like to speak about “biological information”: we speak about the “genetic code” and use words like “transcription” and “translation” as technical terms to describe how information is processed in cells. When we write out DNA sequences, we do not use the chemical structures of the molecules, but rather abbreviate them with the letters “A”, “C”, “G”, and “T”.

In other words, biologists commonly describe biological information with an extended analogy to one of the ways humans use information: language. This approach has its advantages, of course—but that conversation also revealed its drawbacks to me. When biologists use the term “information,” are they describing a process that is analogous to human language or code or something that is a language or code? From the perspective of my conversation partner, it was clearly the latter, based on his understanding of ID arguments.

ID and the Argument from Information

Within the ID community, the “argument from information” is used in two main ways. The first claim is that the ultimate origin of biological information—i.e., the biological information necessary to produce the first life—must be non-material. If indeed what we see in biology is information, so the argument goes, then it must come from a designing mind. In a 2010 interview discussing his book Signature in the Cell, philosopher of science and ID advocate Stephen Meyer makes this point clearly:

The DNA molecule is literally encoding information into alphabetic or digital form. And that’s a hugely significant discovery, because what we know from experience is that information always comes from an intelligence, whether we’re talking about hieroglyphic inscription or a paragraph in a book or a headline in a newspaper. If we trace information back to its source, we always come to a mind, not a material process. So the discovery that DNA codes information in a digital form points decisively back to a prior intelligence. That’s the main argument of the book…

… But there are some lotteries where the odds of winning are so small that no one will win. And that’s the situation of trying to build new proteins or genes from random arrangements of the subunits of those molecules. The amount of information required is so vast that the odds of it ever happening by chance are miniscule. I make the calculations in the book. There’s a point at which chance hypotheses are no longer credible, and we’ve long since gone past that point when we’re talking about the origin of the information necessary for life.

For Meyer, then, the existence of biological information in living things is prima facie evidence that it was designed, and not the result of a material process.

A second claim used within the ID community is that biological information, as we observe it in present-day organisms, is too complex to be the result of evolutionary processes working to assemble it over time. Put another way, even if the original information had been designed at the origin of life, evolution would not have been able to start from this information and go on to produce new genes and new functions through random mutation and natural selection. Meyer puts the argument this way:

In any case, the need for random mutations to generate novel base or amino-acid sequences before natural selection can play a role means that precise quantitative measures of the rarity of genes and proteins within the sequence space of possibilities are highly relevant to assessing the alleged power of mutation-selection mechanism. Indeed, such empirically derived measures of rarity are highly relevant to assessing the alleged plausibility of the mutation-selection mechanism as a means of producing the genetic information necessary to generating a novel protein fold. Moreover, given the empirically based estimates of the rarity (conservatively estimated by Axe at 1 in 10^77 and within a similar range by others) the analysis … pose(s) a formidable challenge to those who claim the mutation-natural selection mechanism provides an adequate means for the generation of novel genetic information — at least, again, in amounts sufficient to generate novel protein folds…

It follows that the neo-Darwinian mechanism — with its reliance on a random mutational search to generate novel gene sequences — is not an adequate mechanism to produce the information necessary for even a single new protein fold, let alone a novel animal form, in available evolutionary deep time.

For Meyer, then, the presence of information in living systems and his claim that natural mechanisms such as evolution cannot account for it together form a major part of his case for Intelligent Design. On his view, information must be provided for life to begin and for new genes and proteins to arise.

So is “biological information” merely an analogy of convenience for biologists, or is what we see in the cell information in the sense of a language or code? Next, we’ll begin to explore how cellular information processing works as a way to begin addressing this question.

Is “biological information” merely an analogy of convenience for biologists, or does the cell contain information in the sense of a language or code? Now we explore the science behind this question and its implications for the arguments of the Intelligent Design movement.

In 1696, British apologist and author John Edwards published a lengthy treatise on Scripture and natural theology – with the descriptive (if rather wordy) title A Demonstration of the Existence and Providence of God From the Contemplation of the Visible Structure of the Greater and Lesser World. As the title suggests, Edwards was on a mission both to convince skeptics and to shore up the faith of believers using what he viewed as the best science of the day. A significant portion of the book attacks heliocentrism – the hypothesis of Copernicus that the sun, rather than the earth, is the center of the universe and that the earth is in motion around the sun – on both scriptural and scientific grounds. These apologetic arguments were doomed to fail, as we know in hindsight. By 1730, convincing empirical evidence for stellar aberration – the effect of a moving earth on incoming starlight – was available and widely viewed as strong evidence for the Copernican view. Edwards’s apologetic, which had seemed so convincing to him in 1696, had a shelf life of less than 35 years.

A second argument by Edwards, however, fared much better. Edwards was fascinated by the properties of the sun and stars. In particular, he was taken with their seemingly inexhaustible supply of fuel, for which there was no good scientific explanation in his day (pg. 61):

This stupendous Magnitude argues the Greatness, yea the Immensity and Incomphrensiblenes of their Maker. And if it be ask’d, Whence is that Fewel for those vast Fires, which continually burn? Whence is it that they are not spent and exhausted? How are those flames fed? None can resolve these Questions but the Almighty Creator, who bestowed upon them their Being; who made them thus Great and Wonderful, that in them we might read his Existence, his Power, his Providence…

For Edwards, then, the properties of sun and stars were both beyond the reach of human understanding, and evidence for God’s existence – and the lack of scientific explanation was a key feature in his argument. It would not be until the 1920s and 1930s that the idea of nuclear fusion as the energy source for stars would be hypothesized and tested. This argument of Edwards lasted for over 200 years before it was revealed as flawed.

An interesting question to consider is this: should Edwards have made these arguments part of his apologetic? They were, after all, effective for their time and place, and likely supported the faith of many people before they were revealed by science to be inadequate. The latter argument, in particular, remained viable for hundreds of years. Should Edwards have forgone the opportunity to make a case for God with this approach in light of the possibility that future science might render his arguments null and void? If you had been alive in 1696, would you have wanted to know how these arguments would fare over the coming decades and centuries?

Biological Information: Great and Wonderful

If Edwards had been aware in 1696 of the intricate processes that govern information processing in the cell, he likely would have described them as “great and wonderful” as he did the properties of the stars. And indeed, both processes are great and wonderful, and (in my opinion) do offer a signpost toward the existence of their Creator. Where I differ from Edwards, however, is that I do not consider a scientific understanding of either process as a diminishment of such a view. With my limited understanding of nuclear physics and how it plays out in stars, for example, I am amazed that fusion reactions can produce the heavier elements necessary for life. In my mind, understanding the physical process is all the more reason for worship and wonder.

So too with biological information. As a cell biologist and geneticist, I find the details of how cells perform information processing fascinating. Nor do I find potential scientific explanations for the function or origins of these processes threatening to my faith. While there is much that remains for science to discover about this area, I think it is misguided to use that fact as the basis for arguments defending the Christian faith, as some in the Intelligent Design (ID) movement have done.

In order to evaluate those ID arguments, it will be helpful to have a picture of these processes in mind. Let’s sketch out some of the basic details before we discuss what science knows, and doesn’t know, about the function and possible origins of this elegant set of biochemical reactions.

DNA and Proteins: Archive and Actions

One of the first things that students of biology learn is that DNA functions as a hereditary molecule, but protein molecules perform most of the day-to-day jobs that need doing in the cell. These two types of molecules are especially suited for their particular roles, and neither is capable of performing the other’s role. Examining their particular properties reveals why this is the case.

Both DNA and proteins are polymers, which is just the technical way of saying that they are large molecules made up of repeating units (called monomers) joined together. If you’re familiar with LEGO, the toy building bricks, you can imagine a stack of bricks – say, a stack of 4×4 bricks of different colors. If one 4×4 brick is a “monomer”, then a stack of such bricks is a “polymer”. The different colors can represent the different monomers available – which, in our analogy, refers to the four possible monomers for DNA (the famous A, C, G, and T) or the 20 different monomers found in proteins (known as amino acids).

For DNA, the four monomers each have an interesting property: their chemical structure is physically attractive to one of the other monomers. “A”, for example, is the chemical adenine – which is attractive to “T”, or thymine. Here’s what the chemical structures look like:

In this diagram, adenine (A) is on the left, and it is paired up with a thymine (T) on the right. Solid lines indicate chemical bonds (“covalent” bonds) within the molecules. The two dashed lines, however, show attractions that are not covalent bonds, but a weak attraction called a “hydrogen bond”. Think of hydrogen bonds as weak magnetic attractions holding the two molecules in place relative to each other. Similarly there are hydrogen bonds that form between cytosine (C) and guanine (G).

The importance of these attractions is that one polymer of DNA can act as a template to construct a second polymer simply by matching up the monomers one by one as they are added to a growing chain of DNA (a job done by protein enzymes). It is this feature of DNA that makes it very easy to copy accurately – making it an ideal carrier of hereditary information.
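
To make the template idea concrete, here is a minimal Python sketch of copying by pairing. It is only an illustration: the example sequence and the names `PAIRS` and `copy_from_template` are made up for this purpose, and the sketch ignores strand direction and the enzymes that do the actual work in the cell.

```python
# Pairing partners for the four DNA monomers (A with T, C with G).
PAIRS = {"A": "T", "T": "A", "C": "G", "G": "C"}


def copy_from_template(template: str) -> str:
    """Build a partner strand by matching each template monomer in turn."""
    return "".join(PAIRS[base] for base in template)


template = "ATGGCA"  # hypothetical sequence, for illustration only
partner = copy_from_template(template)
print(partner)  # TACCGT

# Using the partner as a template regenerates the original sequence.
print(copy_from_template(partner) == template)  # True
```

Because each monomer has exactly one partner, the copy of a copy is the original, which is the property that makes a pairing-based molecule easy to duplicate accurately.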

In contrast to the mere four monomers of DNA, proteins are made up of 20 monomers – the amino acids. The molecular shapes of amino acids are much more diverse than for DNA monomers, as can be seen in this sampling of the 20:

The functional importance of this diversity is that proteins of many shapes can be constructed from this set of monomers, whereas all DNA pretty much has the same shape (the famous double helix of two complementary polymers wrapped around each other). The diverse shapes of proteins allow them to do all sorts of biological functions – act as cell structural components, function as enzymes to speed up chemical reactions, and so on. Proteins do many things; DNA does one. Each is very well suited to its role, and neither can do the function of the other. DNA cannot take on the myriad of shapes needed for functional roles in the cell; proteins cannot use their monomers to copy themselves and pass on their information, since amino acids do not pair up with a partner in the way DNA monomers do. Both roles are essential for life as we know it: we can’t live without either.

In the next post in this series, we’ll examine the role of RNA – a molecule that acts as a bridge between the information in DNA and the structure of proteins. As we will see, RNA can connect these two “languages” because it can carry information like DNA, and fold up to take on functional shapes like proteins.

Previously, we explored how DNA functions as a carrier of information. However, it lacks the ability to perform cellular activities, given its inability to form the complex shapes needed to act as enzymes, structural components of the cell, and so on. In contrast, we saw that proteins perform these roles admirably, while themselves lacking the ability to act as a template for their own replication (as DNA does). Proteins are, in a sense, “disposable” entities: once made, they function until they are damaged and recycled by the cell – broken down for the amino acid monomers they contain. They cannot replicate, nor can their structure be used as a template for replication like the structure of DNA. DNA and proteins are thus complementary in function: DNA supplies the information needed to determine the sequence of amino acids to make functional proteins, and proteins do the cellular work that DNA on its own cannot do.

Interposed between these two systems is a third set of large molecules: ribonucleic acids, or RNA. One way to think about RNA is as a single-stranded version of DNA. This is not entirely correct, since RNA uses one monomer unique to itself (uracil, or “U”) in place of the thymine (or “T”) in DNA. Still, it’s not a bad way of visualizing it. DNA has two strands that wind around each other in the famous “double helix”. As we saw earlier, the attraction between the two strands arises from the alignment of atoms that participate in weak attractive bonds called hydrogen bonds.

RNA, since it is single-stranded, does not have an opposing strand with which to form hydrogen bonds. This allows an RNA molecule to form hydrogen bonds between its own monomers – bending and flexing to line up monomers for pairing. The result is a molecule that has both information-bearing properties, like DNA, and the ability to take on an array of functional three-dimensional shapes, like proteins. RNA molecules can be unfolded so that their sequence of monomers can specify a copy, and can fold up to do important functions in the cell.

A short, single stranded RNA molecule folded up on itself can form an array of functional 3-D shapes, while retaining its ability to carry information in the sequence of its monomers. The paired sections, which look something like a double helix, are in fact paired sections within one RNA strand.
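
As a rough illustration of pairing within one strand, the following Python sketch counts how many monomers at one end of an RNA sequence can pair with monomers at the other end, as they would in a simple hairpin. The sequences and the names `RNA_PAIRS` and `stem_length` are hypothetical, and the sketch assumes only the standard A–U and C–G pairs; real RNA folding is far more involved.

```python
# Standard pairing partners for the four RNA monomers.
RNA_PAIRS = {"A": "U", "U": "A", "C": "G", "G": "C"}


def stem_length(strand: str) -> int:
    """Count pairs formed when the two ends of one strand fold onto each other."""
    left, right = 0, len(strand) - 1
    paired = 0
    # Walk inward from both ends for as long as the facing monomers can pair.
    while left < right and RNA_PAIRS[strand[left]] == strand[right]:
        paired += 1
        left += 1
        right -= 1
    return paired


print(stem_length("GGGAAAACCC"))  # 3: the G's at one end pair with the C's at the other
print(stem_length("AAAAAAAAAA"))  # 0: no monomer has a partner to pair with
```

The same sequence that folds this way can still be read monomer by monomer, which is the dual character described above.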

As it happens, three distinct classes of RNA molecules are essential for transferring the information of DNA into proteins: “messenger RNA”, “transfer RNA” and “ribosomal RNA.” The overall process is straightforward: the sequence of DNA monomers (nucleotides) needs to be transferred to its corresponding sequence of protein monomers (amino acids). Let’s examine the roles of each of these RNA classes in this process in turn.

Messenger RNA: from DNA to working copy

Each chromosome is a very long DNA double helix. This large entity is not suited to easily moving around within the cell; moreover, it also contains the DNA sequence of many proteins (the regions of a chromosome that have sequences for proteins are called protein-coding genes, a term you are likely familiar with). When a cell needs to convert the DNA of a protein-coding gene into a protein, the gene is copied into a single-stranded RNA version that spans only this one gene. A single-stranded RNA copy of an individual gene is called a messenger RNA, or “mRNA”. To make the copy, enzymes spread apart the two strands of the chromosomal DNA such that a section of it is now two single-stranded regions, and one of the strands is used as a template to make the strand of RNA. This provides the cell with a “working copy” of a single gene, in RNA form, that can easily move around within the cell. Specifically, mRNAs need to leave the cell nucleus – the internal compartment where chromosomes are found – and be transported out into the cell cytoplasm – where further processing can take place. While mRNAs do have some 3-D structure that is important for their function, they are mainly used to take a copy of the DNA sequence to the place where it can be converted to an amino acid sequence – a large enzyme complex called the ribosome. Since the mRNA and the DNA from which it was copied are both nucleotide sequences, scientists called this process “transcription” when it was discovered. Transcription, as the name implies, is copying a text to make a duplicate in the same language. The process of converting the nucleotide sequence, or “language”, into an amino acid sequence is correspondingly called translation. The next two RNA types are required for this process.
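
For readers who like to see the idea in miniature, here is a small Python sketch of transcription as template copying. The template sequence and the names `DNA_TO_RNA` and `transcribe` are invented for illustration, and the sketch leaves out strand direction and everything the enzymes do; it only shows that RNA pairs with the DNA template using U where DNA would use T.

```python
# Which RNA monomer pairs with each DNA monomer on the template strand.
DNA_TO_RNA = {"A": "U", "T": "A", "C": "G", "G": "C"}


def transcribe(template_strand: str) -> str:
    """Build the RNA working copy by pairing against the DNA template strand."""
    return "".join(DNA_TO_RNA[base] for base in template_strand)


template = "TACCCT"  # hypothetical stretch of template DNA
print(transcribe(template))  # AUGGGA
```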

Cracking the code

One of the challenges of translation that long puzzled biologists was trying to understand how a sequence of DNA monomers – with four monomer options – was converted into a sequence of amino acids, with 20 options. Indeed, the few monomer types found in DNA led early biologists to conclude that it was far too simple to act as a repository of the vast amounts of information needed by a cell. Later experimental evidence in favor of DNA as the hereditary molecule would have to swim against this current of suspicion.

Once the DNA double helix structure was worked out in the early 1950s, there followed something of a race to elucidate exactly how such a simple structure could specify the complex sequences of amino acids. The structure of DNA immediately answered the “how does it replicate with high accuracy?” question, but failed to reveal how the precise amino acid sequences of proteins were specified.

Since DNA has only four monomers, scientists quickly realized that a simple one-to-one correspondence between one nucleotide and one amino acid would not be the answer – since such a system could only allow for four amino acids in proteins, when 20 were known. Alternative hypotheses were then explored – one of which was that groups of nucleotides could be used to specify a single amino acid. Pairs of nucleotides would thus have 16 possible states (four options for the first and four options for the second, giving 16 total possibilities). Since this is also less than 20, the idea that three nucleotides might specify a single amino acid was investigated. This system allows for 4 × 4 × 4 combinations, or 64 in total. While this exceeded the number needed, this hypothesis proved fruitful. Over time, biologists worked out that three nucleotides were indeed used to “code” for a single amino acid. The fact that there were more nucleotide combinations than the 20 required was also explained in time – many amino acids could be coded for by several combinations of nucleotides. For example, the amino acid glycine was found to be coded for by the DNA nucleotide triplets GGA, GGC, GGG, and GGT. The “code” was in fact partially redundant.
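
The counting argument above can be checked with a few lines of Python. This is just a sketch of the arithmetic; the names `NUCLEOTIDES`, `AMINO_ACIDS`, `words_of_length` and `GLYCINE_TRIPLETS` are my own labels, and the glycine triplets are the four listed above.

```python
from itertools import product

NUCLEOTIDES = "ACGT"  # the four DNA monomers
AMINO_ACIDS = 20      # number of amino acids that must be specified


def words_of_length(n: int) -> list[str]:
    """All possible nucleotide 'words' of length n."""
    return ["".join(letters) for letters in product(NUCLEOTIDES, repeat=n)]


for n in (1, 2, 3):
    count = len(words_of_length(n))
    # Print the word length, the number of words, and whether that covers 20.
    print(n, count, count >= AMINO_ACIDS)
# 1 4 False
# 2 16 False
# 3 64 True

# Redundancy: four different triplets all specify glycine.
GLYCINE_TRIPLETS = {"GGA", "GGC", "GGG", "GGT"}
print(GLYCINE_TRIPLETS <= set(words_of_length(3)))  # True
```

Only at a word length of three does the number of combinations reach 20, and the 44 left over are what leave room for redundancy.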

A code by any other name

It was also, unsurprisingly, at this time that the “code” analogy for these correspondences entered the biologist’s vocabulary. The race to figure out the links between nucleotide triplets and their resulting amino acid was discussed by scientists as “cracking the biological code” and similar phrases. That this work took place in the 1960s, following on from the successes of Bletchley Park and Ultra in World War Two, and in the midst of the espionage and counterespionage of the Cold War, lent further weight to the metaphor. So apt was this analogy, that many scientists, to say nothing of the media and the public, often did not qualify that this was in fact an analogy. The name given for the nucleotide triplets was “codon”, and to this day biology textbooks speak of the codon table as the “genetic code.”

Code or chemistry?

Though the biologists who did this work used “code” language as an analogy for the complex chemistry they were discovering, it is important to remember that they did not view it as an actual code in the sense of a symbolic system designed by an intelligence. In contrast, the Intelligent Design (ID) movement does view the “genetic code” and its associated chemistry in this way – primarily because they claim that natural processes are not sufficient to explain its origin. Once we’ve examined how this intricate system works, we’ll be in a better position to understand and evaluate that claim.

Is the process by which cells use the information stored in DNA to form proteins complex organic chemistry, an indicator of a designing intelligence, or both? The Intelligent Design movement claims that it is very much both, stating that the genetic code found in cells is in fact a genuine code, like what a human might create. In this series we’re taking a tour through the complex chemistry that cells use to process information in order to understand and evaluate this claim.

In yesterday’s post, we looked at the role of messenger RNA (mRNA) as a means to prepare a gene’s DNA sequence for conversion into an amino acid sequence – a process known as translation. Translation, as we have seen, is the process whereby a nucleotide sequence in mRNA is converted – three nucleotides (one codon) at a time – into an amino acid sequence. This process is accomplished by two other types of RNA – let’s examine their roles.

Bridging the two languages: tRNA

Once the codon “code” was worked out, the next question was how each amino acid was specified by a particular codon. One hypothesis was that some sort of adaptor molecule existed for each codon – a molecule that would both recognize the codon sequence and be physically connected to an amino acid. It was known that the enzyme complex that connected amino acids together was the ribosome, so these adaptors, if they existed, would have to work with the ribosome and the mRNA it was using as a template. Eventually it was shown that these adaptor molecules are a different kind of RNA: transfer RNAs, or “tRNAs” for short. In a literal way, they act as a bridge between the “language” of nucleic acids and the “language” of amino acids.

Though mRNA molecules may have some three-dimensional structures important for their function, the structure of tRNA molecules is essential to their role within the cell. Though tRNAs are single-stranded molecules, their nucleotide monomers can still pair up with other monomers using hydrogen bonds – except that they bond with other monomers on the same strand. The result is a single-stranded molecule that folds up through base-pairing within itself – producing something that resembles a cloverleaf with three “leaves” protruding from it:

In the image above, the small right hand image shows a line diagram of the single RNA strand that makes up a tRNA. The larger image shows the actual structure of the molecule. The blue “leaf” contains the anticodon: the nucleotide sequence that recognizes and binds to its corresponding codon on the mRNA with hydrogen bonds. The end of the short, single-stranded section (shown in yellow) is where an amino acid will be physically joined to the tRNA. The loading of the proper amino acid onto the correct tRNA is what gives the code its specificity. Amino acid loading is accomplished by protein enzymes that recognize the shape of a particular tRNA, the shape of its corresponding amino acid, and join them together with a chemical bond. Amino acids are present in the cell, floating around, and available for these enzymes to grab and bind on to their correct tRNA molecule. Interestingly, this process, along with the entire transcription / translation system, depends on random, chaotic motion within the cell, a type of motion known as Brownian motion.
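
The adaptor role can be sketched in Python. This is a simplified illustration under stated assumptions: it uses only the standard A–U and C–G pairs, writes the anticodon as the reverse complement of the codon (the two strands pair in opposite directions), and uses a two-entry loading table with names (`RNA_PAIRS`, `anticodon_for`, `LOADED_AMINO_ACID`, `amino_acid_for_codon`) that are made up for this example.

```python
# Standard pairing partners for the four RNA monomers.
RNA_PAIRS = {"A": "U", "U": "A", "C": "G", "G": "C"}


def anticodon_for(codon: str) -> str:
    """Anticodon that pairs with a codon, written as its reverse complement."""
    return "".join(RNA_PAIRS[base] for base in reversed(codon))


# Illustrative "loading" table: which amino acid the enzymes attach to the
# tRNA carrying each anticodon. Only two entries are shown.
LOADED_AMINO_ACID = {
    anticodon_for("GGA"): "glycine",
    anticodon_for("AUG"): "methionine",
}


def amino_acid_for_codon(codon: str) -> str:
    """Find the tRNA whose anticodon pairs with the codon; return its cargo."""
    return LOADED_AMINO_ACID[anticodon_for(codon)]


print(anticodon_for("GGA"))         # UCC
print(amino_acid_for_codon("GGA"))  # glycine
print(amino_acid_for_codon("AUG"))  # methionine
```

Notice that the codon-to-amino-acid link lives entirely in the loading table: the pairing step only finds the right tRNA, and what that tRNA carries depends on which amino acid was attached to it.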

Once loaded with amino acids, tRNA molecules can interact with the ribosome. This enzyme complex is where mRNAs and tRNAs meet, and the third class of RNA molecules does its work: ribosomal RNAs, or rRNAs.

The ribosome: a massive rRNA enzyme complex

Let’s look at a “cartoon” version of the ribosome to help us understand its function:

The ribosome serves as a platform for the mRNA, and provides two places where tRNA molecules can enter “slots” and interact with it. An incoming tRNA (brought in through Brownian motion) is stabilized by its anticodon attraction to the codon on the mRNA, and once stabilized, the amino acid it bears is bonded to the amino acid attached to the tRNA in the adjacent slot. This reaction breaks the bond of this amino acid with its tRNA, ejecting that “spent” tRNA from the ribosome. The ribosome then ratchets over one codon, and the process repeats until the amino acid chain – a mature protein – is completed. In this way the ribosome translates the nucleic acid sequence of the mRNA into a specified sequence of amino acids.
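
The ratcheting process can be mimicked with a short Python loop. This is a sketch only: the codon table below is a small subset of the standard table, the example mRNA is invented, and the names `CODON_TABLE` and `translate` are mine. The text above does not discuss how the chain ends; the sketch assumes the standard stop codons (UAA, UAG, UGA) signal completion.

```python
# A small subset of the standard codon table. None marks a stop codon.
CODON_TABLE = {
    "AUG": "Met",
    "GGA": "Gly", "GGC": "Gly", "GGG": "Gly", "GGU": "Gly",
    "UUU": "Phe",
    "AAA": "Lys",
    "UAA": None, "UAG": None, "UGA": None,
}


def translate(mrna: str) -> list[str]:
    """Read the mRNA one codon at a time and grow the amino acid chain."""
    chain = []
    # Step along the message three nucleotides at a time, like the ratchet.
    for start in range(0, len(mrna) - 2, 3):
        codon = mrna[start:start + 3]
        amino_acid = CODON_TABLE[codon]
        if amino_acid is None:  # stop codon: the chain is complete
            break
        chain.append(amino_acid)
    return chain


print(translate("AUGGGAUUUAAAUAA"))  # ['Met', 'Gly', 'Phe', 'Lys']
```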

It has long been known that the ribosome is a complex of RNA molecules (named “ribosomal RNA” or “rRNA”) and some associated proteins. It was also suspected that the ribosome might in fact be an RNA enzyme, or “ribozyme”, rather than a protein enzyme. Though most enzymes (molecules that favor specific chemical reactions) are proteins, some are RNA molecules.

The idea that the ribosome is a ribozyme was first suggested by proponents of the “RNA world” hypothesis. This hypothesis suggests that present-day, DNA-based life is a modified descendant of RNA-based life. In this proposed model, RNA was both a hereditary molecule and a source of functional, 3-D structures that did enzymatic functions. Only later, so the hypothesis goes, did DNA get added on to act as a hereditary molecule, and proteins take over most enzymatic functions.

One prediction of the RNA world hypothesis was that the ribosome might have retained its RNA-based enzymatic structure. This was spectacularly confirmed in the year 2000, by careful analysis of ribosome structure. The researchers who did this work showed that although the proteins in the ribosome stabilize the rRNA molecules, they do not have an enzymatic role.

ID, the ribosome, and the RNA world hypothesis

Those who follow the Intelligent Design literature will know that philosopher and historian of science Stephen Meyer discusses transcription and translation at length in his 2009 book Signature in the Cell. In this book, Meyer attempts to build a case that the “information” we see in living organisms is in fact information in the same sense as a human code or a language. Part of his case involves casting doubt on the RNA world hypothesis, since this hypothesis suggests a material, chemical origin for the genetic code, even if an incomplete one. One of Meyer’s critiques in Signature is that such a hypothesis would have to explain how ribosomes transitioned from using RNA enzymes to using protein ones: Meyer erroneously claims that the enzyme in the ribosome that joins amino acids together is a protein. This is, of course, incorrect, since present-day ribosomes are ribozymes. As we have seen, proponents of the RNA world hypothesis suspected that the ribosome might still be a ribozyme, since its function may have been difficult to transition from an RNA enzyme to a protein one. When, in 2000, the ribosome was definitively shown to be a ribozyme, it was widely seen as a successful prediction for the RNA world position. That Meyer was unaware of this widely-cited and highly-influential work (it would garner the 2009 Nobel Prize in Chemistry, the same year that Meyer’s book appeared) came as quite a surprise to biologists reading Signature, especially given its import for Meyer’s claims.

Despite this blunder, the ID community continues to use the “argument from information” as a key plank in its platform. Next, we’ll begin to sketch out that argument, and discuss its strengths and weaknesses in light of our understanding of how transcription and translation work at the molecular level.

Over the last few posts in this series, we’ve explored how cells store “information” in DNA (as a sequence of DNA nucleotides), and transfer DNA information into sequences of amino acids (i.e. make proteins) that can do the day-to-day jobs needed for running a cell. As we have seen, that process is a highly intricate one, full of complex chemistry. At the heart of the system are tRNA molecules that act as a bridge between an amino acid and its corresponding codon on the mRNA (see image above).

What we see in present-day cells is a complex, integrated system for transferring “information” from DNA to RNA to proteins—using RNA as the key intermediary. Naturally, biologists are interested in the possible origins of this system: how did it come to be? In general, the success of evolution as a theory for how living things have diversified from common ancestors has led scientists to investigate if what we call “life” is the modified descendant of a previous “non-living” system. In other words, if living things are the modified descendants of other life, what happens if you work backwards to the origin of life? Might life come from non-life? Does the information processing system we observe in cells have a natural explanation, or was it created by God in a way we would describe as “supernatural”?

As an aside, as a Christian biologist I would be perfectly fine with the answer being either “natural” or “supernatural”. Both natural and supernatural means are part of the providence of God, and the distinction is not a biblical one in any case. Perhaps God set up the cosmos in a way to allow for abiogenesis to take place. Perhaps he created the first life directly—though, as we will see, there are lines of evidence that I think are suggestive of the former rather than the latter. Similarly, I would have been fine with God supernaturally sustaining the flames of the sun for our benefit, as English apologist John Edwards claimed long ago. I do happen to think that solar fusion is an elegant way to “solve” this problem, and as a person of faith I think it evinces a deeper, more satisfying design than some sort of miraculous interventionist approach for keeping the sun going. I recognize, however, that seeing design in the natural process of solar fusion—or abiogenesis—is not the sort of argument that some Christian apologists are looking for.

Information – a major ID apologetic

So, is the information storage and processing system we see in living things the result of natural processes, or God’s supernatural action? It is precisely on this question that the Intelligent Design (ID) movement has built a significant component of its apologetic. Stephen Meyer, in his book Signature in the Cell, makes two main claims with respect to biological information. The first is that scientists do not, as of yet, have a complete explanation for how biological information arose:

… no purely undirected physical or chemical process—whether those based upon chance, law-like necessity, or the combination of the two—has provided an adequate causal explanation for the ultimate origin of the functionally specified biological information.

Secondly, Meyer claims that specified information always is the result of an intelligence:

I further argue, based upon our uniform and repeated experience, we do know of a cause—a type of cause—that has demonstrated the power to produce functionally specified information from physical or chemical constituents. That cause is intelligence, or mind, or conscious activity… Indeed, whenever we find specified information—whether embedded in a radio signal, carved in a stone monument, etched on a magnetic disc, or produced by a genetic algorithm or ribozyme engineering experiment—and we trace it back to its source, invariably we come to a mind, not merely a material process.

For Meyer, then, biological information is a clear sign of a Designer who used, at least in part, a non-material process to produce it. It should come as no surprise that I do not find this approach convincing, even though I share Meyer’s Christian convictions that God is the creator of all that is, seen and unseen (to paraphrase the creeds).

On Meyer’s first point, we agree. Research on abiogenesis has not, by any stretch, provided “an adequate causal explanation for the ultimate origin of the functionally specified biological information”. Nor will it, in the foreseeable future, if ever. The second point, however, is debatable. The examples Meyer gives are all examples of human-generated information. Yes, humans can generate information. The question, however, is whether a natural system can generate information. We know by direct experience that evolution can produce new information. This is a topic we will explore in detail in a later post, and one I have written about several times before; Meyer, as we will see, disputes this evidence. If a natural process like evolution can produce new information, then it makes sense to see if other natural processes, perhaps similar to evolution, could have produced the information we see in living systems from non-living precursors.

Is the genetic code really a “code”?

One of the key challenges for abiogenesis research is to explain the origin of the genetic code: how it came to be that certain codons specify certain amino acids. Recall that tRNA molecules recognize and bind codons on mRNA through their anticodons, and bring the correct amino acid for that codon to the ribosome in the process. One feature of the tRNA system is that there is no direct chemical or physical connection between an amino acid and its codon or anticodon. Amino acids are connected to tRNA molecules at the “acceptor stem” section (the yellow region in the above diagram). Moreover, the attachment site at the end of the acceptor stem (the 3′ CCA sequence) is the same for every tRNA, regardless of what amino acid it carries. Connecting the proper amino acids to their tRNA molecules is the job of a set of protein enzymes called aminoacyl tRNA synthetases. These enzymes recognize free amino acids and their proper tRNA molecules and specifically connect them together. Because there is no direct interaction between an amino acid and its codon, in principle it seems that any codon could have been assigned to any amino acid. If so, how might this system have arisen without any chemical connections to guide its formation?
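As a rough illustration of the adaptor logic described above, the sketch below models a handful of entries from the standard genetic code as a lookup table and computes the anticodon that would pair with a given codon. The function and variable names are invented for this example, only a small fragment of the 64-codon table is included, and wobble pairing is ignored.

```python
# A small fragment of the standard genetic code, keyed by mRNA codon.
# Only a few of the 64 codons are listed; all names here are illustrative.
CODON_TO_AMINO_ACID = {
    "AUG": "Met",
    "UGG": "Trp",
    "UUU": "Phe", "UUC": "Phe",
    "CAU": "His", "CAC": "His",
    "AUU": "Ile", "AUC": "Ile", "AUA": "Ile",
}

# Watson-Crick pairing partners for RNA bases.
COMPLEMENT = {"A": "U", "U": "A", "G": "C", "C": "G"}


def anticodon_for(codon: str) -> str:
    """Return the fully complementary anticodon, written 5'->3'.

    Codon and anticodon pair antiparallel, so the complement is reversed.
    Wobble pairing is deliberately left out of this simplified sketch.
    """
    return "".join(COMPLEMENT[base] for base in reversed(codon))


def translate(mrna: str) -> list[str]:
    """Read an mRNA three bases at a time and look up each codon."""
    usable = len(mrna) - len(mrna) % 3  # ignore any trailing partial codon
    codons = [mrna[i:i + 3] for i in range(0, usable, 3)]
    return [CODON_TO_AMINO_ACID[codon] for codon in codons]


if __name__ == "__main__":
    print(anticodon_for("AUG"))       # CAU
    print(translate("AUGUUUCACUGG"))  # ['Met', 'Phe', 'His', 'Trp']
```

In this sketch the correspondence between codon and amino acid lives entirely in the table, which mirrors the way present-day cells maintain it through tRNAs and synthetases rather than through direct contact. The sketch says nothing about how the table came to have the entries it does.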

Significantly, the lack of a direct chemical or physical connection between amino acids and their codons or anticodons forms a critical part of the Intelligent Design (ID) argument that the “genetic code” is in fact a genuine code of the sort that is determined and manufactured by a designing intelligence, and is not the product of what scientists would call a natural origin. This argument rests on the claim that since there is no physical connection between amino acids and codons (or anticodons) in the present-day system, the “genetic code” is an arbitrary, symbolic code: that is, the codons and their corresponding amino acids are not connected through chemistry. Since there is no connecting chemistry, so the argument goes, there is no chemical path that could bring the system into being. And if there is no material, chemical process that can bring it into being, then it must have its origin through another means, such as a designing intelligence that produced it directly, and not through a material process.

Meyer lays out his argument for an arbitrary genetic code on pages 247-248 of Signature (emphases mine).

Self-organizational theories have failed to explain the origin of the genetic code for several reasons. First, to explain the origin of the genetic code, scientists need to explain the precise set of correspondences between specific nucleotide triplets in DNA (or codons on the messenger RNA) and specific amino acids (carried by transfer RNA). Yet molecular biologists have failed to find any significant chemical interaction between the codons on mRNA (or the anticodons on tRNA) and the amino acids on the acceptor arm of tRNA to which the codons correspond. This means that forces of chemical attraction between amino acids and these groups of bases do not explain the correspondences that constitute the genetic code…

Thus, chemical affinities between nucleotide codons and amino acids do not determine the correspondences between codons and amino acids that define the genetic code. From the standpoint of the properties of the constituents that comprise the code, the code is physically and chemically arbitrary. All possible codes are equally likely; none is favored chemically.

Here we can see Meyer’s argument clearly: in order to provide a material explanation for the origin of the genetic code, scientists need to explain how the specific correspondences between codons and amino acids came about. But, as he notes, there is no physical connection between them in the present system that can explain the correspondences. The code is arbitrary—and for Meyer, this indicates design.

Crisps or chips?

Having recently returned from a family vacation in Europe, my kids and I have a new appreciation for this line of argument. Travelling to the UK put our family into a similar, yet distinct linguistic context. Learning the words for various things in the UK was part of the fun. For example, the kids soon learned that if they wanted a bag of potato chips, they needed to ask for “crisps” instead of “chips”—“chips” being what they thought of as “French fries” (though curiously retained in the common UK/North American construction, “fish and chips”). Now, does it matter if a group settles on “chips” or “crisps”? No, not really—what matters is that people know what you are talking about. In principle, any word could be used for thinly sliced and deep-fried potatoes, as long as everyone in the group agreed on what that word meant. Languages thus have an element of arbitrariness to them, and manufactured codes even more so. In fact, a human code benefits from arbitrary associations in that it makes it much harder to crack.

I recall reading Meyer’s argument for an arbitrary code when Signature first came out in 2009, and being surprised by it. The reason for my surprise was simple: in 2009 there was already a detailed body of scientific work that demonstrated exactly what Meyer claimed had never been shown.[1] Though Meyer claimed that “molecular biologists have failed to find any significant chemical interaction between the codons on mRNA (or the anticodons on tRNA) and the amino acids on the acceptor arm of tRNA to which the codons correspond”, this was simply not the case.

One hypothesis about the origin of the genetic code is that the tRNA system is a later addition to a system that originally used direct chemical interactions between amino acids and codons. In this hypothesis, amino acids would directly bind to their codons on mRNA, and then be joined together by a ribozyme (the ancestor of the present-day ribosome). This hypothesis is called “direct templating”, and it predicts that at least some amino acids will directly bind to their codons (or perhaps anticodons, since the codon/anticodon pairing could possibly flip between the mRNA and the tRNA).

So, is there evidence that amino acids can bind directly to their codons or anticodons on mRNA? Meyer’s claim notwithstanding, yes—very much so! Several amino acids do in fact directly bind to their codon (or in some cases, their anticodon), and the evidence for this has been known since the late 1980s in some cases. Our current understanding is that this applies only to a subset of the 20 amino acids found in present-day proteins. In this model, then, the original code used a subset of amino acids in the current code, and assembled proteins directly on mRNA molecules without tRNAs present. Later, tRNAs would be added to the system, allowing for other amino acids—amino acids that cannot directly bind mRNA—to be added to the code.

The fact that several amino acids do in fact bind their codons or anticodons is strong evidence that at least part of the code was formed through chemical interactions and, contra Meyer, is not an arbitrary code. The code we have, or at least the part of it covering those amino acids for which direct binding was possible, was indeed a chemically favored code. And if it was chemically favored, then it is quite likely that it had a chemical origin, even if we do not yet understand all the details of how it came to be. As such, building an apologetic on the presumed future failings of abiogenesis research, when current research already undercuts one’s thesis, seems to me as problematic for Meyer in 2009 as it was for Edwards in 1696. Do unanswered questions remain? Of course. Should we bank on them never being answered? Or would it be wiser to frame our apologetics on what we know, rather than what we don’t know?[2]

In the next post in this series, we’ll address another of Meyer’s claims: that evolution is incapable of generating significant amounts of new information.

Patterns like this one, resulting from magnetic forces on iron filings, arise as a result of natural forces. Image available under CC BY-SA 2.0, https://commons.wikimedia.org/w/index.php?curid=166826.

So far, we have examined evidence that strongly suggests that the genetic code had a natural origin. If this is correct, it undermines the Intelligent Design (ID) argument that the genetic code was designed apart from natural processes. To put it plainly, if the genetic code had an origin driven in part by chemical binding events, then it is not a “genuine code” in the sense we humans use the word—and further research might reveal plausible scenarios by which the entire code may have come to be.

When writing that last section, however, I had forgotten that ID proponent Stephen Meyer, along with his colleague Paul Nelson, had written a paper disputing the evidence for direct templating. They published their work in the in-house ID journal BIO-Complexity in 2011. Though I read it shortly after it was published (and I recall finding it unsatisfactory, even then) I did not remember it when writing that last post. This was indeed a mistake—I should have at least mentioned it, or better still addressed its claims. Failing to do so led to the Discovery Institute—the leading ID organization of which Meyer is a key member—calling me out for “recycling arguments refuted years ago”. Well, if nothing else, it made for a good headline I suppose. And yes, I have been using these arguments against Meyer’s position for several years—since in my view, they remain as valid now as they did in 2010 when I first raised them. So, they are “recycled” in that sense. But have Meyer and Nelson truly “refuted” the evidence for chemical interactions between amino acids and codons or anticodons? No, they have not—but it will take some effort to understand why. Since this claim is so important for the ID movement, it’s worth the effort to understand the science, as well as the inadequacy of the ID interpretation of it.

What’s at stake?

The ID movement in general, and Meyer in particular, has staked quite a lot on the assertion that the genetic code is a “genuine code”, in the sense that it represents arbitrary assignments of amino acids to codons. Because of this, advances in research that bear on this assertion are problematic for ID. If science eventually demonstrates a plausible mechanism for the natural origins of the genetic code, ID will lose a major argument of key apologetic importance. As such, it’s not surprising to see science in this area vigorously contested by the ID movement. For them, the genetic code is a code, and codes only come from intelligent coders, as it were.

As a kid, I used to be fond of making my own secret codes by drawing up a table of correspondences between symbols of my choosing and letters of the alphabet. Assigning correspondences between pairs of symbols and letters was of course arbitrary—there was nothing about the symbols and letters that chose themselves. In order to decode messages, the table was necessary, since it could not be worked out from examining the code itself. Meyer sees the genetic code in exactly this way—as a list of amino acids corresponding to certain codons, where in principle any amino acid could be assigned to any codon in an arbitrary fashion. For Meyer, calling the “genetic code” a code is not merely using an analogy to human-designed codes: he sees it as a genuine, symbolic code directly constructed by an agent rather than by a natural process. This thesis forms a major tenet of his 2009 book, Signature in the Cell.
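To make the idea of an arbitrary table concrete, here is a minimal sketch of the kind of childhood substitution code described above. It is only an illustration: the function names are hypothetical, uppercase letters stand in for hand-drawn symbols, and the random seed exists solely to make the example repeatable.

```python
import random
import string


def make_code_table(seed: int) -> dict[str, str]:
    """Pair each lowercase letter with a symbol chosen by shuffling.

    Uppercase letters stand in for invented symbols. Nothing about a
    letter favors one symbol over another; the seed picks the pairing.
    """
    symbols = list(string.ascii_uppercase)
    random.Random(seed).shuffle(symbols)
    return dict(zip(string.ascii_lowercase, symbols))


def encode(message: str, table: dict[str, str]) -> str:
    """Replace each letter with its symbol; leave other characters alone."""
    return "".join(table.get(ch, ch) for ch in message)


def decode(ciphertext: str, table: dict[str, str]) -> str:
    """Invert the table to turn symbols back into letters."""
    reverse = {symbol: letter for letter, symbol in table.items()}
    return "".join(reverse.get(ch, ch) for ch in ciphertext)


if __name__ == "__main__":
    table = make_code_table(seed=1)
    secret = encode("meet me at noon", table)
    print(secret)
    print(decode(secret, table))  # meet me at noon
```

Any seed yields a table that works equally well, which is what “arbitrary” means in this context. The question the rest of this post turns on is whether the genetic code resembles such a table or whether some of its pairings are favored by chemistry.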

As we have seen in Signature, Meyer claimed in support of his view that chemical interactions between amino acids and codons had never been found. If such interactions were known, so his argument goes, then there might be a case for some sort of natural, chemical process that led to the present-day genetic code. Since no interactions were known, Meyer claimed, there was no support for a natural process that could have formed the code. As such, he argued, the lack of a natural explanation for the genetic code shows that it was directly fashioned by an intelligent agent.

Meyer and Nelson respond to Yarus

In their paper disputing the evidence for amino acid binding, Meyer and Nelson make a series of claims about the work of Yarus and colleagues (who, as we have seen, are a major research group working on the direct templating hypothesis). Meyer and Nelson list their arguments as follows:

- Yarus et al.’s methods of selecting amino-acid-binding RNA sequences ignored aptamers that did not contain the sought-after codons or anticodons, biasing their statistical model in favor of the desired results.

- The DRT model Yarus et al. seek to prove is fundamentally flawed, since it would demonstrate a chemical attraction between amino acids and codons that does not form the basis of the modern code.

- The reported results exhibited a 79% failure rate, casting doubt on the legitimacy of the “correct” results.

- Having persuaded themselves that they explained far more than they actually had, Yarus et al. then simply assumed a naturalistic chemical origin for various complex biochemicals, even though there is no evidence at present for such abiotic pathways.

To summarize, Meyer and Nelson assert there is no need to abandon the claim made in Signature that the genetic code has no natural explanation (and thus, is the direct creation of a designer) for several reasons: the work of Yarus and colleagues is poorly done, and in reality does not show evidence of binding; amino acid binding is not a feature of the modern code, so these proposed mechanisms would not explain the origin of the code in any case; the results have a high rate of failure, so even the claimed positive results are suspect; and Yarus and colleagues do not account for other unexplained problems for hypotheses of abiogenesis in general.

Let’s examine these arguments in detail, starting with the first and third claims.

The first claim seeks to explain away the observed binding of amino acids to their codons or anticodons on short lengths of RNA (aptamers) as merely statistical artifacts of how Yarus and colleagues conducted their experiments. Meyer and Nelson liken the experimental design to fishing with a net, throwing back fish that are not wanted, and then declaring that almost nothing but “wanted” fish were caught in the first place.

This shows a misunderstanding of how Yarus and colleagues actually did their experiments. Sy Garte (who has written for BioLogos and is a biochemist) was quick to point this out to Nelson in the comment thread for the previous post in this series. Yarus and colleagues did indeed examine RNA sequences that did not bind to specific amino acids. As Garte wrote in response to Nelson:

Actually, Yarus in many of his papers, did exactly what you are asking of him here. As one example from his 2003 paper that I linked in my previous comment, he writes: “Of the remaining isolates sequenced, only one other repetitively isolated motif was prevalent, representing 18% of clones. Although it contained a possibly interesting conserved AUAUAUA sequence, this second isolate showed little specificity, having apparently similar affinity for isoleucine, alanine, valine, and methylamine.” Note this second isolate (with no useful specificity) is also based on the ILE codon. In other words, he did look at other enriched sequences, and further evaluated them. He frequently admits that his technique isolates RNAs that are unrelated to amino acid-specific codons or anticodons.

As Garte rightly points out, the experiments that Yarus and colleagues have performed over the years have reported a number of RNA sequences that bind amino acids nonspecifically, or that bind amino acids without containing the codon or anticodon for that amino acid. So, contrary to Meyer and Nelson’s claim, Yarus and colleagues did indeed examine a wide variety of RNA sequences that interact with amino acids. Meyer and Nelson are simply incorrect on this point. Moreover, as we will see in an upcoming post, these binding affinities have been confirmed by researchers in other groups, increasing our confidence that they are genuine, and not statistical artifacts arising out of biased, sloppy research.

Secondly, the binding results that Yarus and colleagues report are not based on trying to find “desired results” as Meyer and Nelson claim. The Yarus research group is interested in genuinely discovering which amino acids bind to their codons (or anticodons), and which do not. In fact, they fully expect that there are some amino acids which will not exhibit this sort of binding. They also expect that amino acids that do bind one of their codons (or anticodons) will not bind all of their possible codons or anticodons. Recall that the genetic code is partially redundant: most amino acids can be coded for by several codons. The expectation is that even the amino acids that were added to the code by chemical binding would later also “pick up” additional codons that they do not bind to. In other words, the direct templating hypothesis is not expected to explain the origin of the entire code, but only a more ancient subset within the current code. That the current code has a subset within it that exhibits specific binding affinities between amino acids and codons (or anticodons) is strong evidence that the current code passed through a stage where these affinities were important. As such, Meyer’s claim that the code is entirely arbitrary, and thus “designed”, fails. The genetic code is not like my childhood codes. Some of the “symbols and letters” in the genetic code did seem to “pick themselves”: they paired together because they are chemically attracted to each other.

The idea that direct templating does not purport to explain the origins of the entire code is a point that Meyer and Nelson do not seem to understand. This is most obvious in their third objection in the list above, that “the reported results exhibited a 79% failure rate, casting doubt on the legitimacy of the ‘correct’ results.” In order to understand why Meyer and Nelson are mistaken, we need to understand where the “79%” figure comes from.

The work of the Yarus lab, summarized and discussed in their 2009 paper, examined eight amino acids (of the 20 found in the present-day code) for evidence of binding to their codons or anticodons. Their data reveal that six amino acids show strong evidence of binding to one or more of their codons or anticodons. These six amino acids, however, only show evidence for binding for a subset of their codons or anticodons (Table 1):

Table 1. Yarus et al., 2009 report that six amino acids show evidence of binding to their codons or anticodons. Glutamine and leucine showed no significant interactions in this study. Of the 24 possible codons (and their corresponding 24 possible anticodons) 79% show no evidence of binding.

Due to the redundancy of the genetic code, these eight amino acids have between them 24 possible codons, and thus 24 possible corresponding anticodons. For example, arginine can be coded for by six different codons (with six corresponding anticodons) in the modern code, but only three of those 12 possible sequences show evidence of binding to arginine directly. Thus, for these eight amino acids there are 48 possible sequences that may have shown binding to their amino acid. As you can see from the table, only 10 of the 48 show significant binding—or roughly 21%. That means that 79% of the possible codon or anticodon sequences do not show binding for this sample of eight amino acids. This is what Meyer and Nelson report as the “failure rate” for these experiments. But this is only a “failure rate” if one somehow expects that all 48 sequences should be shown to bind—and it would seem that Meyer and Nelson have exactly this expectation. But this is emphatically not what the direct templating model expects or predicts. Rather, as we have seen, the expectation is that only a subset of the code was established through chemical binding, and that later on other amino acids, codons and anticodons were added to the code. Whether they understand it or not, Meyer and Nelson are refuting a straw-man version of the direct templating model.
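The arithmetic behind the “79%” figure can be checked in a few lines. The sketch below uses only the counts quoted above; the variable and function names are invented for illustration. It also shows how the picture changes when the same data are counted per amino acid rather than per sequence.

```python
def fraction(part: int, whole: int) -> float:
    """Return part/whole as a plain fraction."""
    return part / whole


# Counts quoted in the text above.
AMINO_ACIDS_TESTED = 8
AMINO_ACIDS_WITH_BINDING = 6
POSSIBLE_CODONS = 24
POSSIBLE_SEQUENCES = POSSIBLE_CODONS * 2  # each codon has one anticodon
BINDING_SEQUENCES = 10

# Counting per sequence gives the figure Meyer and Nelson cite.
per_sequence = fraction(BINDING_SEQUENCES, POSSIBLE_SEQUENCES)

# Counting per amino acid: how many show binding to at least one sequence.
per_amino_acid = fraction(AMINO_ACIDS_WITH_BINDING, AMINO_ACIDS_TESTED)

# Arginine alone: 6 codons plus 6 anticodons, 3 of which show binding.
arginine = fraction(3, 6 * 2)

if __name__ == "__main__":
    print(f"Sequences showing binding:     {per_sequence:.0%}")      # 21%
    print(f"Sequences showing no binding:  {1 - per_sequence:.0%}")  # 79%
    print(f"Amino acids showing binding:   {per_amino_acid:.0%}")    # 75%
    print(f"Arginine sequences that bind:  {arginine:.0%}")          # 25%
```

The same data thus yield 79% “failure” when every codon and anticodon is counted, and 75% “success” when the question is whether an amino acid binds any of its sequences at all. Which denominator is appropriate depends on what the direct templating model actually predicts.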

Moreover, the claim that the high “failure rate” casts doubt on the veracity of the bindings that were observed is puzzling. If Yarus and colleagues had reported binding affinities for all 24 codons and all 24 anticodons, that would be good reason to suspect that their experimental design was not able to distinguish between real binding and spurious binding. The fact that they observe differences—highly significant binding for some sequences, but not for others—indicates that their experimental design can in principle distinguish between binding and non-binding. So, far from being a problem for Yarus and colleagues—as Meyer and Nelson present it—this is evidence that their experimental design is appropriate and working correctly.

In the next section in this series, we’ll look at more problems with Meyer and Nelson’s response: how the work of Yarus and colleagues is profitably informing the research of other groups, and leading to new discoveries that bear on the direct templating hypothesis.

Eukaryotic Ribosome. Image credit: By Fvoigtsh—Own work, CC BY-SA 3.0, via Wikimedia.

As we have seen above, leaders in the Intelligent Design (ID) movement have developed an argument for design using the genetic code (the correspondences of amino acids and the nucleotide base triplets that specify them). Specifically, they claim that the genetic code is a “genuine code” (i.e. one constructed directly by an intelligent agent) and not a set of correspondences that arose through a natural process. As we have seen, however, this argument has to face strong evidence that part of the genetic code does in fact have its origin in physical interactions between amino acids and their corresponding codons or anticodons. The last post detailed how several amino acids do in fact directly bind to their own codons or anticodons, suggesting that the modern translation system, with its tRNA molecules that bridge amino acids and codons in the present day, is in fact the modified descendant of a translation system that relied on direct interactions. If so, the ID argument falls apart, and one of their major apologetic arguments is lost.

Previously, we saw that the main ID proponents who use the “genetic code is a real code” argument are Stephen Meyer and Paul Nelson. In their 2011 paper attempting to rebut the evidence for direct chemical binding between codons/anticodons and amino acids, one of their main lines of argument was that the observed binding was not genuine, but rather an artifact of poorly-designed experiments. While we have examined why this is not in fact the case for the experiments in question—they were done appropriately, and the results are not spurious—there is a second way to evaluate a body of scientific research done by one specific research group (in this case, the Yarus lab): look to see if it is profitably informing the research of other groups. If other groups are building on the work of another lab, and finding it to make accurate predictions, then we can be even more confident that the results are meaningful.

As the evidence mounted, through the work of Yarus and colleagues, that some amino acids do in fact bind their codons or anticodons, other researchers began to take note. One research group decided to use the results of the Yarus lab to make a prediction that could be tested by examining present-day proteins. They reasoned that if such interactions were important at the time when the translation process was emerging, these same sorts of interactions may have been important for how complexes of proteins and RNAs worked together at that time. In other words, they reasoned that interactions between amino acids and codons/anticodons might have had other roles in addition to translation, perhaps structural roles. Proteins and RNAs that bound together to perform a function, for example, might have used these same chemical affinities to guide their formation. If so, then examining protein/RNA complexes that are old enough to date from this time in biological history might show evidence of close association between amino acids in the protein component and matching codons/anticodons in the RNA component. But where might an ancient complex of RNA and protein be found that could be used to test this prediction?

Ribosomes: a molecular time capsule

The obvious place to look was the ribosome—the very same RNA/protein complex that cells use for translation. Firstly, the ribosome can be found in all life in the present day, meaning that it is older than the proposed last universal common ancestor of all living things—or “LUCA” for short. As such, the ribosome would have been present at the time the current translation system was worked out. Secondly, the three-dimensional structure of the ribosome is known with great precision through a technique called X-ray crystallography. We know exactly how ribosomes, with their blend of RNA and protein components, are folded together. With these two features, looking at ribosomes was the perfect way to test the hypothesis that early RNA/protein complexes used chemical affinities between amino acids and their codons/anticodons for structural purposes as well as for translation.

The results, published in 2010, were striking. Within the folded structure of ribosomes, several amino acids were found in close association with some of their possible anticodons. Note that within a ribosome, the RNA components are not translated: they are untranslated RNA molecules that act as a ribozyme, or RNA enzyme. The protein components come from different DNA sequences that are transcribed into RNA and then translated into protein before they join the ribosome complex. As such, the RNA components and the protein components of a ribosome are separate pieces, yet these proteins have some amino acids that are attracted to their anticodon sequences within the RNA components. So, even though these attractions are not useful for translation purposes, they are present within the ribosome structure. These results strongly support the hypothesis that interactions between amino acids and anticodons were biologically important at the time when translation emerged, since they are in large measure determining the three-dimensional structure of what is arguably the most important biochemical complex in life as we know it. Moreover, these results give strong experimental support for the idea that the genetic code was shaped by chemical interactions at its origin and is not a chemically arbitrary code. In response to these results, as well as the prior work by Yarus, a third research group has extended this type of analysis to the protein sets of entire organisms, and found that this pattern of correspondences between amino acids and their codons is widespread across whole genomes. This pattern, first identified by the Yarus group, has now been confirmed by the work of many other scientists, and it continues to make successful predictions.

A second observation from the ribosome study was also informative, but for a different reason. Some amino acids in the ribosome complex are closely associated with anticodons that do not, in the present-day genetic code, code for that amino acid. These anticodons, however, have previously been suspected to once have coded for those amino acids. The genetic code shows evidence of having been optimized through natural selection to minimize the effects of mutation. Such optimization requires some codon/anticodons to be reassigned to different amino acids over time. What was fascinating for the researchers looking at the ribosome was that some codons that were previously suspected to have been reassigned are associated with what were previously thought to be the “original” amino acid in the ribosome complex. This observation provides experimental support for codon reassignment over time: even though an amino acid and a particular codon/anticodon may have a chemical affinity for each other, this affinity could later be overridden by the introduction of tRNA molecules that bridge the amino acid and the codon without direct interaction between them. Despite this reassignment, the original correspondences remain in the ancient structure of the ribosome, where they serve a structural role. As such, this evidence is a window into how the genetic code may have evolved over time: starting with direct affinities, and then shifting to a modified system with tRNA molecules that allowed some of those original pairings to be shifted through natural selection.

In summary, the supposedly “flawed” work of Yarus (as claimed by Meyer and Nelson) is not only being used successfully by other researchers; those researchers are also adding to the evidence that the genetic code (a) has a chemical basis, and (b) has evolved over time. Both of these lines of evidence undermine the ID claim that the genetic code is an arbitrary code directly produced by a designer apart from a natural process.

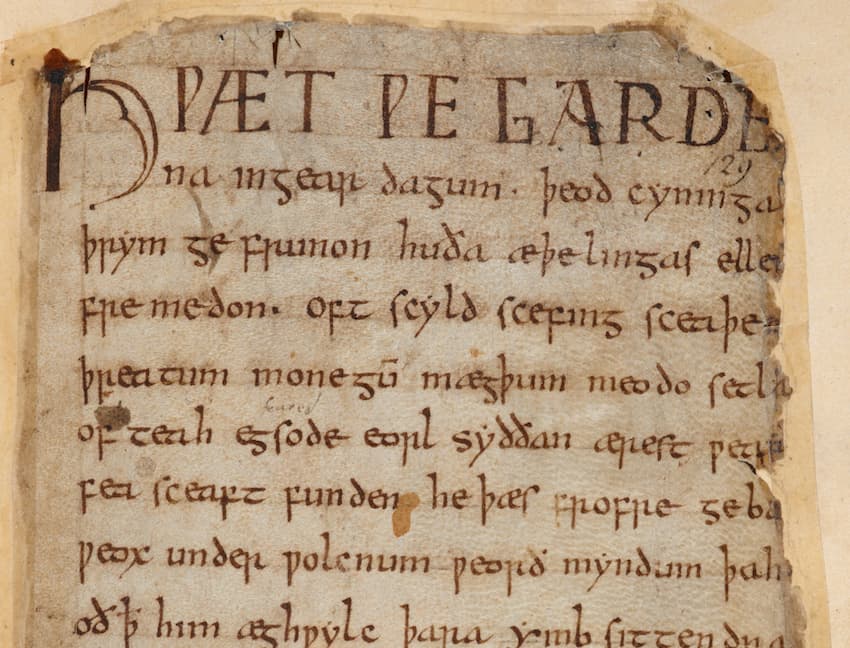

First page of Beowulf in Cotton Vitellius.

We have explored what is known about the ultimate origins of biological information. Although we know little about this subject, the facts we do know point to the genetic code—the mechanical basis for encoding and transmitting biological information—as having an origin shaped by chemical interactions. Accordingly, we have seen how these findings undermine the Intelligent Design (ID) argument that the genetic code was designed apart from a natural process.

A second claim commonly found in the ID literature is that—aside from the ultimate origins of biological information—evolution is unable to generate new information, or at least enough new information to produce the variety of life we observe in the present day. Claims of this nature are also commonly encountered in young-earth creationist (YEC) and old-earth creationist (OEC) circles. Given the prevalence of this claim in anti-evolutionary arguments, ID or otherwise, it’s worth delving into the evidence that evolutionary mechanisms do indeed have the ability to generate adequate amounts of new information to drive significant change over time.

Rarely is information truly “new”, and a little change goes a long way

Before discussing the known mechanisms that can produce new information, it may be helpful to provide some context. Two main points are important here. First, “new” biological information is seldom truly new. Second, biologists have good evidence that small amounts of new biological information are adequate to accomplish significant evolutionary change. Let’s examine these issues in turn.

Firstly, evolution is a process of descent with modification. This means that evolutionary change is not about producing “new” or “novel” forms, but rather slightly modifying existing forms. Allowing this process to unfold over millions of years can lead to significant change, to be sure—but from generation to generation within that process, the change is small. One excellent analogy for the gradual changes produced by evolution stacking up over time to accumulate major differences is that of language evolution—an analogy I have explored at length before. While Anglo-Saxon of the 10th century and present-day modern English cannot reasonably be called the “same language”, the process that produced the latter from the former was gradual enough that each generation spoke the “same language” as their parents and their children. So too with evolution—“new information” accumulates over time, usually by modifying what was there before. Even so-called “major innovations” in evolution are accomplished gradually—vertebrates are modified descendants of invertebrates; land animals are modified descendants of fish; whales are modified descendants of land animals, and so on. As such, it’s not reasonable to expect that rapid acquisition of large amounts of new information is needed to drive significant evolutionary change over time. Gradual accumulation will do.

Secondly, even a small accumulation of new information is enough to cause major evolutionary changes. One thing that we now know in this era of DNA sequencing is that species that are quite different from each other can nonetheless have very, very similar information content. A prime example is comparing human DNA with that of our closest living relatives, the other great apes. Human genes and chimpanzee genes, for example, are exceedingly close to one another in their information content and structure. We have only subtle differences at the gene level, by whatever measure one chooses—our genes (which are a small subset of our entire genomes, but the vast majority of the information that makes us up) are around 99% identical to each other. Yet these subtle information differences add up to quite significant biological differences. Many of the information differences between us are due to where and when our genes are active during development—subtle tweaks that ultimately lead to the marked differences we see between our species.

One of the ironies of Christian anti-evolutionary apologetics is that it is common to see groups argue both that humans and apes are hugely different from one another, and that evolution cannot produce significant amounts of new information. Well, one cannot have it both ways, now that we know that humans and other apes are so similar at the genetic level. If humans and apes are really that biologically different—and we are, to be sure!—then one is faced with the brute fact that these major biological differences are underwritten by a level of information change that is easily accessible to evolutionary mechanisms.

With these points in mind, let’s turn to discussing how DNA can change over time to produce new information.

Something old, something new

Darwin’s great insight about evolution was not that species shared common ancestors, but rather that species could be shaped, over time, by their environment to become better suited to it. Nature, he reasoned, could act in the same selective way that humans did to shape a species to a particular form. If populations have variation, and those variants do not reproduce at the same frequency in a given environment, then over time those variants best suited for that environment will increase in frequency, while those less suited will decrease in frequency. In this way, the average characteristics of a population could shift over time.
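The frequency shift described above can be sketched numerically. What follows is only a toy model, not anything from Darwin or from the studies discussed here: it assumes two variants, a very large population with no random drift, and made-up relative reproductive rates (`fitness_a`, `fitness_b`). All names and numbers are illustrative.

```python
def next_frequency(p, fitness_a, fitness_b):
    """One generation of selection on two variants, A and B.

    p is the current frequency of variant A. The fitness values are the
    relative reproductive success of each variant (illustrative numbers).
    """
    mean_fitness = p * fitness_a + (1 - p) * fitness_b
    return p * fitness_a / mean_fitness


def simulate(p0, fitness_a, fitness_b, generations):
    """Return the frequency of variant A at every generation, starting at p0."""
    frequencies = [p0]
    for _ in range(generations):
        frequencies.append(next_frequency(frequencies[-1], fitness_a, fitness_b))
    return frequencies


# Hypothetical scenario: variant A starts rare and reproduces 5% more often.
trajectory = simulate(p0=0.01, fitness_a=1.05, fitness_b=1.00, generations=200)
print(f"start: {trajectory[0]:.3f}, after 200 generations: {trajectory[-1]:.3f}")
```

Even a small reproductive edge, compounded over many generations, moves the better-suited variant from rare to common in this sketch. Real populations add drift, changing environments, and many interacting genes, none of which are modeled here.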

While Darwin understood these principles, he did not have any idea how variation was generated, or even how hereditary information was transmitted from one generation to the next. The discovery of DNA as the hereditary molecule provided the answers: DNA, in that it is faithfully copied from generation to generation, transmits hereditary information. In rare cases where DNA copying is not perfect, new variation enters the population. DNA replication, then, is both the means of maintaining information and the means of introducing change.

While DNA is great at storing and transmitting information, it is lousy at performing the day-to-day biological functions that cells need to do—enzymatic functions, energy processing functions, and so on. These functions are performed by proteins, which are lousy molecules for storing information, but fantastic molecules for getting biological work done. RNA, as we have seen, is the bridge between these two worlds—a gene, made of DNA, is transcribed into a working copy of RNA called a messenger RNA (mRNA), which is then translated by the ribosome into a protein. The information in a gene—a DNA region—can thus be used to specify how a protein is shaped, and where and when it is made during an organism’s development. The gene regions that specify the protein structure are called coding sequence, and the DNA that specifies where, when, and how often a gene should be transcribed (and translated) is called regulatory DNA. For a given gene, some regulatory DNA is outside of the transcribed region, and some is within it. Biologists often talk about genes being “expressed” as a shorthand for a gene being transcribed into mRNA and translated into a protein. Regulatory DNA, then, controls the “pattern of expression” for a gene.
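For readers who find code helpful, the flow from coding sequence to mRNA to protein can be sketched in a few lines. This is a deliberately simplified illustration: it uses only six entries from the standard genetic code, the short "gene" is invented for the example, and it ignores regulatory DNA, RNA processing, and the chemistry that actually does the work in a cell.

```python
# A small excerpt of the standard genetic code (mRNA codon -> amino acid).
CODON_TABLE = {
    "AUG": "Met", "GCU": "Ala", "GAA": "Glu",
    "AAA": "Lys", "UGG": "Trp", "UAA": "STOP",
}


def transcribe(coding_strand):
    """Return the mRNA matching a DNA coding strand (T is replaced by U)."""
    return coding_strand.upper().replace("T", "U")


def translate(mrna):
    """Read the mRNA three bases at a time until a stop codon is reached."""
    protein = []
    for i in range(0, len(mrna) - 2, 3):
        residue = CODON_TABLE[mrna[i:i + 3]]
        if residue == "STOP":
            break
        protein.append(residue)
    return protein


# Hypothetical coding sequence, written codon by codon for readability.
gene = "".join(["ATG", "GCT", "GAA", "AAA", "TGG", "TAA"])
mrna = transcribe(gene)
print(mrna)             # AUGGCUGAAAAAUGGUAA
print(translate(mrna))  # ['Met', 'Ala', 'Glu', 'Lys', 'Trp']
```

The lookup table stands in for what tRNA molecules and the ribosome accomplish chemically. The code is an analogy for the process, not a description of how cells store or read a table.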

As we can now appreciate, there are several ways for the information content of a gene to change. There could be a DNA sequence change (a mutation) in any part of a gene’s DNA sequence. If a change occurs in the coding sequence, there may be a change in the protein sequence—one altered amino acid, for example—perhaps giving rise to a change in function. If a change occurs in regulatory DNA, then the new variant might have one or more of several possible changes: an increase or decrease in the amount of RNA that is transcribed, new transcription of the gene at times or places where it was not transcribed before, or the loss of transcription at times or places where the gene was previously expressed.
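A small sketch can show why a coding-sequence change "may" (rather than must) alter the protein. The example below assumes an invented four-codon sequence and a handful of entries from the standard genetic code, written here as DNA codons. It covers only coding changes; regulatory changes are not modeled.

```python
# A few entries of the standard genetic code, written as DNA codons.
CODONS = {
    "ATG": "Met", "CCT": "Pro", "GAG": "Glu",
    "GAA": "Glu", "GTG": "Val", "TAA": "STOP",
}


def translate(dna):
    """Translate a DNA coding sequence, stopping at the first stop codon."""
    protein = []
    for i in range(0, len(dna) - 2, 3):
        residue = CODONS[dna[i:i + 3]]
        if residue == "STOP":
            break
        protein.append(residue)
    return protein


def point_mutation(dna, position, new_base):
    """Return a copy of the sequence with one base replaced (0-based position)."""
    return dna[:position] + new_base + dna[position + 1:]


original = "ATGCCTGAGTAA"                    # hypothetical sequence: Met Pro Glu
silent = point_mutation(original, 8, "A")    # GAG -> GAA, still Glu
missense = point_mutation(original, 7, "T")  # GAG -> GTG, Glu becomes Val

print(translate(original))  # ['Met', 'Pro', 'Glu']
print(translate(silent))    # ['Met', 'Pro', 'Glu']
print(translate(missense))  # ['Met', 'Pro', 'Val']
```

Both variants differ from the original by a single base, yet only one of them changes the protein. Whether such a change also alters function depends on the protein and is beyond what this toy example can show.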

Playing doubles

More dramatic changes in information state are also possible. Deletion mutations can remove a gene in its entirety, and duplication mutations can produce two copies of a gene, side by side. An interesting effect of duplication mutations is that the two copies sometimes go on to accumulate differences (in their amino acid sequences or in their regulatory DNA, or both) that lead to them acquiring distinct functions. In this way, new functions may develop over time. There are even known cases in which the entire chromosome set of an organism was duplicated at once—a so-called “whole genome duplication” or “WGD” event. For example, there is very good evidence that early vertebrates had two WGD events in their lineage—the effects of which can be seen in all vertebrates living today, including humans. While many of the duplicated genes have been lost, others were retained and, over time, picked up sequence changes and functional changes. This greatly added to the information content of the vertebrate lineage.
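Duplication followed by divergence can also be sketched as a toy model. Everything here is an illustrative assumption: the "genome" is a Python list holding one invented sequence, mutations are uniformly random single-base substitutions, and there is no selection deciding which changes are kept.

```python
import random

BASES = "ACGT"


def duplicate(genome, index):
    """Tandem duplication: place a copy of a gene right beside the original."""
    return genome[:index + 1] + [genome[index]] + genome[index + 1:]


def mutate(sequence, rng):
    """Substitute one randomly chosen base with a different base."""
    position = rng.randrange(len(sequence))
    new_base = rng.choice([b for b in BASES if b != sequence[position]])
    return sequence[:position] + new_base + sequence[position + 1:]


def differences(a, b):
    """Count the positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))


rng = random.Random(0)            # fixed seed so the run is repeatable
genome = ["ATGGCTGAAAAATGGTAA"]   # hypothetical one-gene genome
genome = duplicate(genome, 0)     # now two identical copies, side by side

# Let only the second copy pick up changes; the first is left untouched.
for _ in range(5):
    genome[1] = mutate(genome[1], rng)

print(genome)
print("differences between copies:", differences(genome[0], genome[1]))
```

Because the first copy is untouched, the original sequence is still present while the second copy drifts away from it. In real lineages both copies can change, and selection influences which changes persist.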

One possible twist on the “WGD” theme is the case of hybridization—when two related but distinct species interbreed and form fertile progeny. In this case, two species diverge from a common ancestor, and over time, differences in their genes accumulate. This is also a form of information gain over time, except the gain is distributed between two related species/populations. If these two species later interbreed to form a hybrid, then the offspring will inherit one chromosome set from each species.

This situation may not be ideal if a significant amount of change has accumulated between the two species. The chromosomes from each species may not readily pair with their “equivalent” chromosomes from the other species for the purposes of cell division. If so, then a WGD event may provide a fix: a WGD event occurring after a new hybrid species forms duplicates every chromosome in the genome, giving each chromosome a new, perfectly matched partner to pair with. The end result is a species that has fully two genomes within it: a full genome from each pre-hybridization species. Moreover, the two genomes are already slightly different from one another, meaning that they are already down the path of picking up slightly different functions.

From this starting point, further changes over time are probable—shifting the information state of both ancestral genomes within the “doubled hybrid” species. A recent scientific paper provides an excellent illustration of exactly this process—the discovery that the frog species Xenopus laevis has two complete genomes from two (now extinct) ancestral species—species that were separate from one another for several million years before hybridizing.

Human genome sequence data has also revealed, in recent years, that the lineage leading to modern humans hybridized with related hominin species in the past—species such as Neanderthals, Denisovans, and likely others. While modern humans do not retain much of the DNA we picked up from these species, we do nonetheless retain some, and some of it is likely of functional significance for some human groups. We too have shifted our information state by this means.

Taking stock

It’s important to keep in mind that all of these changes in information state are based on well-known and understood mechanisms. We can observe these mechanisms occurring in the present day, and we know—from comparing the DNA of related species to each other—that a small amount of genetic change can bring about significant morphological change. As such, the claim that evolution is incapable of generating the new information needed to drive species change over time is not supported by the evidence, but rather is in stark contrast to it.

Still, one might say—these forms of information change over time merely modify existing information. Even if evolution can get a reasonable amount accomplished with modification, is it the case that evolution cannot create something truly new? As we will see in the next post in this series, evolutionary mechanisms—despite ID claims to the contrary—can and do produce genuinely new information as well.

INTRO BY DENNIS: I am pleased to introduce my friend and colleague Dr. Dan Kuebler to our readership. Dan is a talented biologist and gifted thinker whom I had the pleasure of getting to know over the last two summers as a member of the Scholarship and Christianity in Oxford (SCIO) Bridging the Two Cultures group of scholars. While there, Dan gave a presentation on protein structure and folding as it pertained to claims made by the Intelligent Design (ID) movement, and I invited him to consider writing a post for BioLogos on the subject. As we saw in the last section in this series, the fundamental chemistry of amino acids drives the formation of alpha helices and beta sheets in proteins. This property, as Dan discusses below, contributes to a relatively small number of possible protein folds that seem to occur at a high enough frequency for evolution to find – further showing that ID claims to the contrary are mistaken.

“There is grandeur in this view of life, with its several powers, having been originally breathed by the Creator into a few forms or into one; and that, whilst this planet has gone circling on according to the fixed law of gravity, from so simple a beginning endless forms most beautiful and most wonderful have been, and are being evolved.”

This line, from the concluding paragraph of Darwin’s Origin of Species, is one of the book’s most widely cited passages. Given the theistic implications coupled with the poetic nature of this passage, the frequency of its citation is not surprising. However, if one were to pick a line that most adequately summed up Darwin’s thoughts on the nature of the evolutionary process, it would not be this famous passage. Rather, it would be the lines that immediately precede this passage.